Linear Regression With Categorical Independent Variables

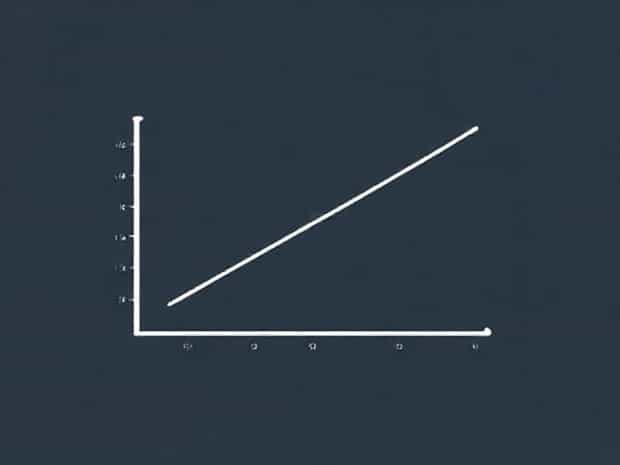

Linear regression is a fundamental statistical technique used to model the relationship between a dependent variable and one or more independent variables. While it is commonly applied with continuous independent variables, real-world data often include categorical independent variables, such as gender, education level, or geographical region. Incorporating these categorical predictors into a linear regression model requires careful handling, as standard regression assumes numerical input. Understanding how to use categorical independent variables effectively enhances the flexibility and applicability of linear regression in analyzing diverse datasets.

Understanding Categorical Independent Variables

Categorical independent variables, also known as qualitative variables, represent data grouped into distinct categories rather than measured on a numerical scale. Examples include marital status (single, married, divorced), industry type (technology, healthcare, education), or color preference (red, blue, green). Unlike numerical variables, most categorical variables have no inherent order. The exception is ordinal variables, whose categories are ranked, and this distinction affects how they are included in regression models.

Types of Categorical Variables

- Nominal Variables: These categories have no intrinsic order. For example, hair color or city names are nominal.

- Ordinal Variables: These categories have a defined order, such as education level (high school, bachelor, master, doctorate) or satisfaction ratings (low, medium, high).

Recognizing the type of categorical variable is crucial because it influences the coding method and interpretation of the regression coefficients.

Incorporating Categorical Variables in Linear Regression

To include categorical independent variables in linear regression, they must first be converted into numerical form. This is typically done with one-hot encoding, which creates a binary (0/1) indicator variable for every category, or with dummy coding, which does the same but omits one category. In either case, the binary variables let the regression model represent categorical distinctions numerically.
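The following is a minimal sketch of one-hot encoding in plain Python, with no external libraries assumed. The function name `one_hot` and the color data are illustrative, not part of any particular library. Categories are sorted so that the column order is reproducible.

```python
def one_hot(values):
    """Return (categories, rows): one 0/1 indicator column per distinct category."""
    categories = sorted(set(values))  # fixed, reproducible column order
    rows = [[1 if v == c else 0 for c in categories] for v in values]
    return categories, rows


colors = ["red", "blue", "green", "blue"]
cats, encoded = one_hot(colors)
print(cats)  # ['blue', 'green', 'red']
for color, row in zip(colors, encoded):
    print(color, row)
```

Every row contains exactly one 1, so the indicator columns always sum to 1.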

Dummy Coding

Dummy coding involves creating new binary variables for each category of the categorical variable, except for one reference category. The reference category serves as a baseline against which other categories are compared. For example, if a variable represents three regions (North, South, West), two dummy variables might be created:

- Region_South: 1 if the observation is from the South, 0 otherwise

- Region_West: 1 if the observation is from the West, 0 otherwise

The North region serves as the reference category. Regression coefficients for the dummy variables indicate the expected difference in the dependent variable compared to the reference category, holding all other variables constant.
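The region example can be sketched in plain Python. The helper `dummy_code` is hypothetical. It creates one 0/1 column per non-reference category, and rows for the reference category (North) are all zeros.

```python
def dummy_code(values, reference, prefix):
    """Encode a categorical variable as 0/1 dummies, omitting the reference category."""
    if reference not in values:
        raise ValueError(f"reference category {reference!r} not found")
    levels = sorted(set(values) - {reference})
    names = [f"{prefix}_{level}" for level in levels]
    rows = [[1 if v == level else 0 for level in levels] for v in values]
    return names, rows


regions = ["North", "South", "West", "South", "North"]
names, rows = dummy_code(regions, reference="North", prefix="Region")
print(names)  # ['Region_South', 'Region_West']
for region, row in zip(regions, rows):
    print(f"{region:<6} {row}")  # North rows print as [0, 0]
```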

Interpreting Coefficients

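When categorical variables are included in a linear regression model, interpretation differs slightly from that of continuous predictors. A continuous coefficient describes the change in the dependent variable per one-unit increase in the predictor. The coefficient of a dummy variable instead represents the expected difference in the dependent variable between that category and the reference category. When an ordinal variable is dummy-coded, the coefficients can reveal a trend across the ordered categories. If it is instead entered as a single numeric score, the model assumes equal effects between adjacent levels, so care should be taken to ensure that this linearity assumption is reasonable.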
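The sketch below illustrates this interpretation. It uses plain Python with exact `fractions.Fraction` arithmetic, and both the `ols` helper and the data are hypothetical, made up for illustration. The model's only predictors are region dummies, with North as the reference category. In such a model, the intercept equals the mean of the reference group, and each dummy coefficient equals that group's mean minus the reference mean.

```python
from fractions import Fraction


def ols(X, y):
    """Least-squares coefficients from the normal equations (X^T X) b = X^T y,
    solved exactly by Gauss-Jordan elimination over Fractions."""
    F = Fraction
    p = len(X[0])
    # Augmented matrix [X^T X | X^T y]
    A = [
        [sum(F(r[i]) * F(r[j]) for r in X) for j in range(p)]
        + [sum(F(r[i]) * F(v) for r, v in zip(X, y))]
        for i in range(p)
    ]
    for col in range(p):
        pivot = next((k for k in range(col, p) if A[k][col] != 0), None)
        if pivot is None:
            raise ValueError("X^T X is singular (perfect multicollinearity)")
        A[col], A[pivot] = A[pivot], A[col]
        for k in range(p):
            if k != col and A[k][col] != 0:
                f = A[k][col] / A[col][col]
                A[k] = [a - f * b for a, b in zip(A[k], A[col])]
    return [A[i][p] / A[i][i] for i in range(p)]


# Hypothetical outcomes for three regions; North is the reference category.
data = [("North", 10), ("North", 12), ("South", 15), ("South", 17),
        ("South", 16), ("West", 9), ("West", 11)]
X = [[1, int(g == "South"), int(g == "West")] for g, _ in data]  # intercept, Region_South, Region_West
y = [v for _, v in data]

b0, b_south, b_west = ols(X, y)
print(float(b0))       # 11.0: mean of North
print(float(b_south))  # 5.0: South mean (16) minus North mean (11)
print(float(b_west))   # -1.0: West mean (10) minus North mean (11)
```

Solving the normal equations with exact fractions keeps this small example free of rounding noise. For real datasets, a numerically stable library routine such as NumPy's `numpy.linalg.lstsq` is a better choice.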

Practical Steps for Linear Regression with Categorical Variables

In practice, the following steps are commonly used to incorporate categorical independent variables into a linear regression analysis:

- Identify Categorical Variables: Determine which independent variables are categorical and their type (nominal or ordinal).

- Create Dummy Variables: For each categorical variable, generate binary variables for all categories except the reference category.

- Fit the Regression Model: Include both continuous and dummy-coded categorical variables in the linear regression model.

- Interpret Results: Evaluate the coefficients of the dummy variables. Each one measures the difference from the reference category, which shows its impact on the dependent variable.

- Check Model Assumptions: Ensure that the linearity, homoscedasticity, and independence assumptions are met. Categorical variables do not inherently violate these assumptions, but they can influence residual patterns, for example when the residual variance differs across categories.
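Steps 1 and 2 can be sketched as a helper that turns records into a design matrix. Everything here is an illustrative assumption:

- the function name `build_design`;
- the rule that string-valued fields are categorical (a real analysis must decide this, and whether each variable is nominal or ordinal, by inspecting the data);
- the sample records.

The returned `X` can then be passed to any least-squares routine for steps 3 and 4.

```python
def build_design(records, target, references):
    """Turn a list of dict records into (column_names, X, y).

    Assumption: a field is treated as categorical if its value is a string.
    Each categorical field is dummy-coded, omitting its reference level;
    an intercept column is always placed first.
    """
    fields = [f for f in records[0] if f != target]
    names = ["intercept"]
    columns = []  # one function per column, mapping a record to a number
    for f in fields:
        if isinstance(records[0][f], str):  # step 1: identify categorical fields
            ref = references[f]  # KeyError if no reference level was chosen
            for level in sorted({r[f] for r in records} - {ref}):  # step 2: dummies
                names.append(f"{f}_{level}")
                columns.append(lambda r, f=f, level=level: int(r[f] == level))
        else:
            names.append(f)
            columns.append(lambda r, f=f: r[f])
    X = [[1] + [col(r) for col in columns] for r in records]
    y = [r[target] for r in records]
    return names, X, y


# Hypothetical records: one continuous and one categorical predictor.
records = [
    {"experience": 2, "region": "North", "salary": 40000},
    {"experience": 5, "region": "South", "salary": 52000},
    {"experience": 3, "region": "West", "salary": 45000},
]
names, X, y = build_design(records, "salary", {"region": "North"})
print(names)  # ['intercept', 'experience', 'region_South', 'region_West']
print(X)      # [[1, 2, 0, 0], [1, 5, 1, 0], [1, 3, 0, 1]]
```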

Example Scenario

Consider a study aiming to predict house prices based on square footage (continuous) and neighborhood (categorical). Neighborhood has three categories: Uptown, Midtown, and Downtown. Using dummy coding, two variables are created: Midtown (1 if Midtown, 0 otherwise) and Downtown (1 if Downtown, 0 otherwise). Uptown becomes the reference category. If the regression coefficient for Midtown is 20,000, this indicates that houses in Midtown are, on average, $20,000 more expensive than Uptown houses, controlling for square footage.
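The scenario can be reproduced with a small sketch. The data are synthetic and noise-free, generated from an assumed rule. The intercept, the $150-per-square-foot slope, and the Downtown effect of $35,000 are arbitrary illustrative values; only the Midtown effect of $20,000 comes from the scenario above. Because the data follow the rule exactly, the fitted coefficients can be checked against it. The `ols` helper is hypothetical and solves the normal equations exactly with fractions.

```python
from fractions import Fraction


def ols(X, y):
    """Least-squares coefficients from the normal equations (X^T X) b = X^T y,
    solved exactly by Gauss-Jordan elimination over Fractions."""
    F = Fraction
    p = len(X[0])
    A = [
        [sum(F(r[i]) * F(r[j]) for r in X) for j in range(p)]
        + [sum(F(r[i]) * F(v) for r, v in zip(X, y))]
        for i in range(p)
    ]
    for col in range(p):
        pivot = next((k for k in range(col, p) if A[k][col] != 0), None)
        if pivot is None:
            raise ValueError("X^T X is singular (perfect multicollinearity)")
        A[col], A[pivot] = A[pivot], A[col]
        for k in range(p):
            if k != col and A[k][col] != 0:
                f = A[k][col] / A[col][col]
                A[k] = [a - f * b for a, b in zip(A[k], A[col])]
    return [A[i][p] / A[i][i] for i in range(p)]


def row(sqft, hood):
    """Intercept, square footage, and neighborhood dummies (Uptown = reference)."""
    return [1, sqft, int(hood == "Midtown"), int(hood == "Downtown")]


# Synthetic houses; prices follow an assumed rule:
# price = 50,000 + 150 * sqft + 20,000 * Midtown + 35,000 * Downtown
houses = [(1000, "Uptown"), (1500, "Uptown"), (2000, "Uptown"),
          (1200, "Midtown"), (1800, "Midtown"),
          (1100, "Downtown"), (1600, "Downtown")]
X = [row(s, h) for s, h in houses]
y = [50_000 + 150 * x[1] + 20_000 * x[2] + 35_000 * x[3] for x in X]

coef = ols(X, y)
for name, b in zip(["intercept", "sqft", "Midtown", "Downtown"], coef):
    print(f"{name:<9} {float(b):>10,.0f}")
```

The Midtown coefficient comes out as 20,000. This is the expected price difference from Uptown for houses of the same square footage.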

Handling Multiple Categorical Variables

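Linear regression can accommodate multiple categorical variables simultaneously. Each categorical variable is dummy-coded separately, and the resulting dummy variables are included in the regression model. It is important to avoid the dummy variable trap. This occurs when a dummy is included for every category of a variable alongside the intercept. Because those dummies always sum to 1, they exactly reproduce the intercept column, which causes perfect multicollinearity and makes the coefficients impossible to estimate uniquely. Omitting one reference category per categorical variable avoids the trap, and choosing meaningful reference categories keeps the model interpretable.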
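A quick way to see the trap is to compare the number of columns in the design matrix with its rank. This sketch builds two matrices from hypothetical data: one with an intercept plus a dummy for every region, and another that omits the North reference category. The `rank` helper is hypothetical and uses exact Gaussian elimination.

```python
from fractions import Fraction


def rank(matrix):
    """Rank of a matrix via exact Gaussian elimination over Fractions."""
    rows = [[Fraction(v) for v in row] for row in matrix]
    r = 0
    for c in range(len(rows[0])):
        pivot = next((i for i in range(r, len(rows)) if rows[i][c] != 0), None)
        if pivot is None:
            continue  # no pivot in this column
        rows[r], rows[pivot] = rows[pivot], rows[r]
        for i in range(r + 1, len(rows)):
            f = rows[i][c] / rows[r][c]
            rows[i] = [a - f * b for a, b in zip(rows[i], rows[r])]
        r += 1
        if r == len(rows):
            break
    return r


regions = ["North", "South", "West", "South", "North", "West"]
levels = ["North", "South", "West"]

# Intercept plus a dummy for EVERY category: the dummies sum to the intercept column.
trap = [[1] + [int(g == lvl) for lvl in levels] for g in regions]
# Intercept plus dummies for all but the reference category (North).
safe = [[1] + [int(g == lvl) for lvl in levels[1:]] for g in regions]

print(all(sum(r[1:]) == r[0] for r in trap))  # True
print(len(trap[0]), rank(trap))  # 4 3: four columns but rank 3, perfectly collinear
print(len(safe[0]), rank(safe))  # 3 3: full column rank
```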

Interaction Effects

In some cases, the interaction between categorical variables or between categorical and continuous variables can provide valuable insights. Interaction terms allow the model to capture how the effect of one variable depends on the level of another. For example, the effect of a neighborhood on house price may differ based on the number of bedrooms. Including interaction terms requires additional product variables, such as each neighborhood dummy multiplied by the number of bedrooms. It also requires careful interpretation of coefficients, because a dummy's effect is no longer captured by its own coefficient alone.
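The sketch below shows how interaction terms enter the design matrix, assuming Uptown as the reference neighborhood. The coefficient values are hypothetical. They were chosen only to show that, once interactions are present, the Midtown premium over Uptown is no longer a single number but depends on the bedroom count.

```python
def design_row(bedrooms, neighborhood):
    """Intercept, bedrooms, neighborhood dummies (Uptown = reference),
    and dummy-by-bedrooms interaction terms."""
    mid = int(neighborhood == "Midtown")
    down = int(neighborhood == "Downtown")
    return [1, bedrooms, mid, down, mid * bedrooms, down * bedrooms]


# Hypothetical fitted coefficients, in the same order as design_row's columns.
coef = [100_000, 25_000, 10_000, 30_000, 5_000, -2_000]


def predict(bedrooms, neighborhood):
    return sum(c * x for c, x in zip(coef, design_row(bedrooms, neighborhood)))


# Midtown premium over Uptown = coef[2] + coef[4] * bedrooms, so it varies.
for beds in (2, 4):
    premium = predict(beds, "Midtown") - predict(beds, "Uptown")
    print(beds, premium)  # 2 20000, then 4 30000
```

With these made-up coefficients, the Midtown premium is 10,000 + 5,000 × bedrooms. That gives 20,000 at two bedrooms and 30,000 at four.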

Advantages and Considerations

Incorporating categorical variables in linear regression expands the model’s applicability to real-world scenarios where predictors are not purely numerical. However, researchers should consider several points:

- Interpretability: Coefficients must be interpreted relative to the reference category.

- Model Complexity: A categorical variable with k categories adds k - 1 dummy predictors. Several variables with many categories can therefore quickly increase the number of predictors, potentially leading to overfitting.

- Data Balance: Uneven representation of categories may affect coefficient estimates and standard errors. Coefficients for rarely observed categories are estimated less precisely.

- Ordinal Variables: Treating ordinal variables as continuous may sometimes be reasonable. However, it should be justified by checking that the dependent variable changes roughly linearly across the ordered levels.
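Before treating an ordinal variable as a single numeric score, one rough check is to compare the outcome means at each level. If the gaps between adjacent levels are similar, a linear score is easier to defend. The sketch below uses hypothetical satisfaction ratings and outcomes, and the `ORDER` mapping makes the category order explicit. This is an informal diagnostic, not a formal test.

```python
from statistics import mean

# Hypothetical ordinal predictor: the mapping makes the category order explicit.
ORDER = {"low": 1, "medium": 2, "high": 3}

# Hypothetical (satisfaction, outcome) pairs.
data = [("low", 40), ("low", 44), ("medium", 50), ("medium", 54),
        ("high", 70), ("high", 74)]

# Treating the variable as continuous means replacing each level with its
# score, which implicitly assumes equal spacing between levels.
scores = [ORDER[level] for level, _ in data]

# Rough check: mean outcome per level, then the gap between adjacent levels.
level_means = {lvl: mean(y for name, y in data if name == lvl) for lvl in ORDER}
levels = list(ORDER)
steps = [level_means[b] - level_means[a] for a, b in zip(levels, levels[1:])]

print(scores)       # [1, 1, 2, 2, 3, 3]
print(level_means)  # {'low': 42, 'medium': 52, 'high': 72}
print(steps)        # [10, 20]: unequal gaps, so a single linear score is questionable
```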

Linear regression with categorical independent variables is a powerful tool that allows researchers to include qualitative factors in their predictive models. By converting categories into dummy variables and carefully interpreting coefficients relative to reference categories, analysts can gain insights into how categorical factors influence a dependent variable. Whether applied to market research, social science studies, or economic forecasting, understanding how to handle categorical predictors enhances the flexibility and accuracy of linear regression. Proper coding, thoughtful selection of reference categories, and consideration of interactions and model assumptions ensure that the inclusion of categorical variables strengthens the analysis rather than introducing confusion or bias. Mastering these techniques is essential for anyone seeking to perform comprehensive regression analysis on real-world datasets where both numerical and categorical information coexist.