Linear Discriminant Analysis Explained

Linear Discriminant Analysis (LDA) is a classic technique in statistics and machine learning. It is used both for classification and for dimensionality reduction, helping researchers and data scientists make sense of complex labeled datasets. The method works by finding a linear combination of features that best separates two or more classes. The mathematics may look intimidating at first, but the concept is straightforward when broken down step by step. Understanding it makes LDA much easier to apply effectively in practical scenarios.

What is Linear Discriminant Analysis?

Linear Discriminant Analysis is a statistical method used to classify observations into predefined categories. It projects high-dimensional data onto a lower-dimensional space chosen so that the separation between classes is as large as possible. With C classes, this space has at most C − 1 dimensions. Unlike unsupervised dimensionality reduction methods such as Principal Component Analysis (PCA), LDA takes class labels into account, which makes it a natural fit for supervised learning tasks.

Core Idea

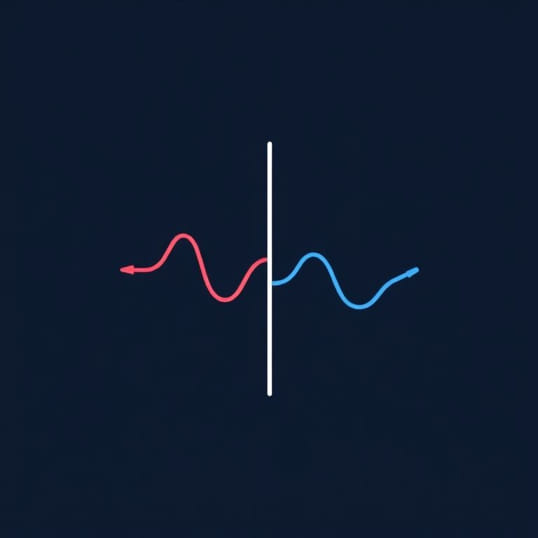

The main goal of LDA is to maximize the distance between the means of different classes while minimizing the variation within each class. In practice it maximizes the ratio of these two quantities. The resulting projection yields a linear decision boundary for classifying new observations. LDA is especially effective when the data forms compact, well-separated groups.
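For two classes, this idea is usually formalized as Fisher's criterion. Let w be a candidate projection direction and μ_k the mean of class k. Let m_k and s_k² be the mean and scatter (sum of squared deviations) of class k after projection. LDA then seeks the w that maximizes:

```latex
J(\mathbf{w}) = \frac{(m_1 - m_2)^2}{s_1^2 + s_2^2},
\qquad m_k = \mathbf{w}^\top \boldsymbol{\mu}_k,
\qquad s_k^2 = \sum_{\mathbf{x} \in \text{class } k} \left(\mathbf{w}^\top \mathbf{x} - m_k\right)^2
```

A large numerator means the projected class means are far apart. A small denominator means each class stays tightly clustered along w.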

How Linear Discriminant Analysis Works

To understand how LDA functions, it helps to break it into steps. These steps provide a clear picture of the logic behind the method and why it is effective for classification tasks.

Step 1: Compute the Means

LDA begins by calculating the mean of each class and the overall mean of the dataset. These values serve as the foundation for determining how spread out the data is within and across groups.
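Here is a minimal NumPy sketch of this step. It assumes the features are stored in a 2-D array `X` (one row per observation) and the labels in a 1-D array `y`. The toy data and the helper name `class_means` are illustrative, not part of any library.

```python
import numpy as np

# Hypothetical toy data: 6 observations, 2 features, 2 classes (0 and 1)
X = np.array([[1.0, 2.0], [2.0, 3.0], [3.0, 3.0],
              [6.0, 5.0], [7.0, 8.0], [8.0, 8.0]])
y = np.array([0, 0, 0, 1, 1, 1])


def class_means(X, y):
    """Return the sorted class labels and one mean vector (row) per class."""
    classes = np.unique(y)
    means = np.array([X[y == c].mean(axis=0) for c in classes])
    return classes, means


classes, means = class_means(X, y)
overall_mean = X.mean(axis=0)  # mean of all observations, ignoring labels
```

For this toy data, the class means are (2, 8/3) and (7, 7), and the overall mean is (4.5, 29/6).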

Step 2: Measure the Scatter

Next, LDA calculates two types of scatter matrices: the within-class scatter and the between-class scatter. The within-class scatter measures how much the data points vary within each group. The between-class scatter measures how far the group means lie from the overall mean, and therefore from one another.
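In symbols, let μ_c be the mean of class c, μ the overall mean, and N_c the number of observations in class c. The within-class scatter is S_W = Σ_c Σ_{x ∈ c} (x − μ_c)(x − μ_c)ᵀ. The between-class scatter is S_B = Σ_c N_c (μ_c − μ)(μ_c − μ)ᵀ. The NumPy sketch below computes both directly from these definitions. The function name `scatter_matrices` and the toy data are illustrative assumptions.

```python
import numpy as np


def scatter_matrices(X, y):
    """Return (S_W, S_B): within-class and between-class scatter matrices."""
    overall_mean = X.mean(axis=0)
    d = X.shape[1]
    S_W = np.zeros((d, d))
    S_B = np.zeros((d, d))
    for c in np.unique(y):
        X_c = X[y == c]
        mu_c = X_c.mean(axis=0)
        centered = X_c - mu_c
        # Sum of (x - mu_c)(x - mu_c)^T over the observations in class c
        S_W += centered.T @ centered
        # N_c (mu_c - mu)(mu_c - mu)^T: weighted spread of the class means
        diff = (mu_c - overall_mean).reshape(-1, 1)
        S_B += len(X_c) * (diff @ diff.T)
    return S_W, S_B


# Hypothetical toy data: 2 features, 2 classes
X = np.array([[1.0, 2.0], [2.0, 3.0], [3.0, 3.0],
              [6.0, 5.0], [7.0, 8.0], [8.0, 8.0]])
y = np.array([0, 0, 0, 1, 1, 1])
S_W, S_B = scatter_matrices(X, y)

# Sanity check: the total scatter splits exactly into within + between parts
centered_all = X - X.mean(axis=0)
S_T = centered_all.T @ centered_all
assert np.allclose(S_T, S_W + S_B)
```

The final check uses the identity S_T = S_W + S_B, where S_T is the total scatter around the overall mean. For this toy data, S_W works out to [[4, 4], [4, 20/3]].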

Step 3: Find the Optimal Projection

The method then looks for a linear transformation that maximizes the ratio of between-class scatter to within-class scatter. Optimizing the ratio, rather than either quantity alone, produces projected data in which the class means are as far apart as possible relative to the spread within each class.
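One standard way to find these directions uses the within-class (S_W) and between-class (S_B) scatter matrices. You solve the generalized eigenvalue problem S_B w = λ S_W w, which amounts to taking the leading eigenvectors of S_W⁻¹ S_B. With C classes, at most C − 1 eigenvalues are non-zero, so LDA yields at most C − 1 useful axes. The sketch below assumes S_W is invertible, which requires more observations than features and no perfectly collinear features. The function name `lda_directions` is hypothetical.

```python
import numpy as np


def lda_directions(X, y, n_components=None):
    """Return a (n_features, k) projection matrix W and its k eigenvalues."""
    classes = np.unique(y)
    overall_mean = X.mean(axis=0)
    d = X.shape[1]
    S_W = np.zeros((d, d))
    S_B = np.zeros((d, d))
    for c in classes:
        X_c = X[y == c]
        mu_c = X_c.mean(axis=0)
        S_W += (X_c - mu_c).T @ (X_c - mu_c)
        diff = (mu_c - overall_mean).reshape(-1, 1)
        S_B += len(X_c) * (diff @ diff.T)

    # Eigen-decomposition of S_W^{-1} S_B (assumes S_W is invertible)
    eigvals, eigvecs = np.linalg.eig(np.linalg.solve(S_W, S_B))
    order = np.argsort(eigvals.real)[::-1]  # largest between/within ratio first
    k = len(classes) - 1 if n_components is None else n_components
    W = eigvecs[:, order[:k]].real
    return W, eigvals.real[order[:k]]


# Hypothetical toy data: 2 features, 2 classes, so k = C - 1 = 1 direction
X = np.array([[1.0, 2.0], [2.0, 3.0], [3.0, 3.0],
              [6.0, 5.0], [7.0, 8.0], [8.0, 8.0]])
y = np.array([0, 0, 0, 1, 1, 1])
W, ratios = lda_directions(X, y)
```

For two classes, this recovers the classic Fisher direction, proportional to S_W⁻¹(μ₁ − μ₀). Each eigenvalue equals the between-to-within ratio achieved along its eigenvector, because S_B w = λ S_W w implies wᵀS_B w / wᵀS_W w = λ.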

Step 4: Transform the Data

Finally, the data is projected onto the new axis. With more than two classes, it is projected onto up to C − 1 axes, where C is the number of classes. Once transformed, the data can be classified using simple rules, such as assigning each new observation to the nearest class mean in the transformed space.
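For two classes, the optimal direction has a closed form, w ∝ S_W⁻¹(μ₁ − μ₀), where S_W is the within-class scatter matrix. That makes the transform-and-classify step fit in a few lines. This NumPy sketch uses the nearest-projected-mean rule described above and ignores class prior probabilities. The toy data and the `predict` helper are hypothetical.

```python
import numpy as np

# Hypothetical toy data: 2 features, 2 classes
X = np.array([[1.0, 2.0], [2.0, 3.0], [3.0, 3.0],
              [6.0, 5.0], [7.0, 8.0], [8.0, 8.0]])
y = np.array([0, 0, 0, 1, 1, 1])

mu0 = X[y == 0].mean(axis=0)
mu1 = X[y == 1].mean(axis=0)
# Within-class scatter, summed over both classes
S_W = sum((X[y == c] - m).T @ (X[y == c] - m) for c, m in ((0, mu0), (1, mu1)))
w = np.linalg.solve(S_W, mu1 - mu0)  # Fisher direction (up to scale)

z_train = X @ w                           # 1-D projected training data
centroids = np.array([mu0 @ w, mu1 @ w])  # class means in the projected space


def predict(X_new):
    """Assign each row of X_new to the class whose projected mean is closest."""
    z = np.atleast_2d(X_new) @ w
    return np.argmin(np.abs(z[:, None] - centroids[None, :]), axis=1)


print(predict([[1.5, 2.5], [7.5, 7.5]]))  # [0 1]
```

Here w comes out as (1.5, −0.25), and the projected class means are about 2.33 and 8.75. Any point projecting below their midpoint is assigned to class 0.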

Key Features of Linear Discriminant Analysis

LDA has several important characteristics that make it a popular choice for many applications in statistics and machine learning.

- Supervised dimensionality reduction that uses class labels

- Maximizes separation between multiple classes

- Useful for both binary and multi-class classification problems

- Relatively simple to implement and interpret

- Works best when each class is approximately Gaussian and all classes have similar covariance

Assumptions of Linear Discriminant Analysis

Like most statistical techniques, LDA works under certain assumptions. Knowing these assumptions helps determine when it is appropriate to use the method and when alternatives might be more suitable.

Normality

LDA assumes that the data for each class follows a multivariate normal distribution. Small deviations from normality are often acceptable, but strong departures from this assumption may reduce accuracy.

Equal Covariance Matrices

Another key assumption is that all classes share the same covariance matrix. This means that the shape and spread of the data distribution are assumed to be similar across classes.
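A quick, informal way to probe this assumption is to compare each class's sample covariance matrix with the pooled covariance that LDA actually uses. The sketch below runs this comparison on synthetic data in which class 2 is deliberately given a different covariance. The relative-distance score and the name `covariance_gaps` are illustrative heuristics, not a formal statistical test such as Box's M.

```python
import numpy as np

rng = np.random.default_rng(0)
# Synthetic data: classes 0 and 1 share a covariance matrix, class 2 does not
shared = np.array([[1.0, 0.5], [0.5, 1.0]])
X = np.vstack([
    rng.multivariate_normal([0.0, 0.0], shared, size=200),
    rng.multivariate_normal([4.0, 0.0], shared, size=200),
    rng.multivariate_normal([0.0, 4.0], np.diag([4.0, 0.25]), size=200),
])
y = np.repeat([0, 1, 2], 200)


def covariance_gaps(X, y):
    """Relative Frobenius distance between each class covariance and the pooled one."""
    classes = [int(c) for c in np.unique(y)]
    covs = {c: np.cov(X[y == c], rowvar=False) for c in classes}
    n, C = len(y), len(classes)
    # Pooled covariance = S_W / (N - C), the single estimate LDA shares across classes
    pooled = sum((np.sum(y == c) - 1) * covs[c] for c in classes) / (n - C)
    return {c: np.linalg.norm(covs[c] - pooled) / np.linalg.norm(pooled)
            for c in classes}


gaps = covariance_gaps(X, y)
```

On this data, class 2 shows a clearly larger gap than classes 0 and 1, which flags the equal-covariance assumption as questionable. With real data, deciding what counts as "large" remains a judgment call.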

Independence of Observations

Each observation is assumed to be independent of the others. Violations of this assumption, such as correlated samples, may affect the reliability of the results.

Applications of Linear Discriminant Analysis

LDA is widely used across different fields due to its effectiveness and simplicity. From medical research to finance, it helps classify data and make informed decisions.

Medical Diagnosis

In healthcare, LDA is used to classify patients based on test results. For example, it can help determine whether a patient falls into a high-risk or low-risk category for a certain disease.

Face Recognition

One of the most well-known applications of LDA is in computer vision, particularly in face recognition systems, where it underlies the Fisherfaces approach. By reducing dimensionality while maintaining class separability, LDA can improve recognition accuracy.

Finance and Marketing

In finance, LDA is used to classify credit risks, while in marketing, it helps segment customers based on their purchasing behavior. These classifications help organizations make better strategic decisions.

Advantages of Linear Discriminant Analysis

There are several reasons why LDA remains a widely used method in data analysis.

- Simple and computationally efficient

- Provides clear and interpretable results

- Estimates fewer parameters than more flexible models such as QDA, which lowers the risk of overfitting on small datasets

- Effective for problems with multiple classes

- Useful for both classification and visualization

Limitations of Linear Discriminant Analysis

Despite its strengths, LDA has some limitations that users need to consider before applying it to their data.

Sensitivity to Assumptions

If the assumptions of normality and equal covariance matrices are violated, LDA may not perform well. When the classes clearly have different covariance matrices, Quadratic Discriminant Analysis (QDA) may be more appropriate, because it estimates a separate covariance matrix for each class.

Not Ideal for Nonlinear Boundaries

LDA creates linear decision boundaries, which means it struggles with data where the separation between classes is nonlinear. Methods that allow curved boundaries, such as QDA or kernel-based classifiers, may be needed for such datasets.

Imbalanced Data

LDA can be affected by imbalanced datasets where one class has far more observations than another. This imbalance can skew the results and reduce classification accuracy.
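The mechanism becomes clear from the standard linear discriminant function. Under LDA's assumptions, the classes are Gaussian with a shared covariance matrix Σ, class means μ_k, and prior probabilities π_k. A new observation x is assigned to the class with the largest score:

```latex
\delta_k(\mathbf{x}) = \mathbf{x}^\top \Sigma^{-1} \boldsymbol{\mu}_k
  - \tfrac{1}{2}\, \boldsymbol{\mu}_k^\top \Sigma^{-1} \boldsymbol{\mu}_k
  + \log \pi_k,
\qquad \hat{y}(\mathbf{x}) = \arg\max_k \delta_k(\mathbf{x})
```

When the priors are estimated from class frequencies, the log π_k term is larger for the majority class, so the decision boundary shifts toward the minority class. Setting the priors to equal values removes this shift, which is one possible adjustment when the training data are imbalanced.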

Linear Discriminant Analysis vs. Principal Component Analysis

Although both LDA and PCA are dimensionality reduction techniques, they serve different purposes. PCA focuses on capturing maximum variance in the data without considering class labels. LDA, on the other hand, explicitly aims to maximize class separation. In practice, PCA is often used for exploratory analysis, while LDA is preferred when the goal is classification.
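The difference is easiest to see on data where the direction of largest variance is not the direction that separates the classes. In the sketch below, both classes are stretched along the x-axis but separated along the y-axis. The data are hypothetical and the code uses NumPy only. PCA's first component follows the stretch. The two-class LDA direction, S_W⁻¹(μ₁ − μ₀) with S_W the within-class scatter matrix, points across it.

```python
import numpy as np

rng = np.random.default_rng(42)
# Two classes, elongated along x (std 5) but separated along y (means 0 and 3)
cov = np.diag([25.0, 0.5])
X0 = rng.multivariate_normal([0.0, 0.0], cov, size=300)
X1 = rng.multivariate_normal([0.0, 3.0], cov, size=300)
X = np.vstack([X0, X1])

# PCA: top eigenvector of the covariance matrix (labels are ignored)
eigvals, eigvecs = np.linalg.eigh(np.cov(X, rowvar=False))
pca_dir = eigvecs[:, -1]  # eigh returns eigenvalues in ascending order

# LDA (two classes): w proportional to S_W^{-1} (mu_1 - mu_0), uses the labels
mu0, mu1 = X0.mean(axis=0), X1.mean(axis=0)
S_W = (X0 - mu0).T @ (X0 - mu0) + (X1 - mu1).T @ (X1 - mu1)
lda_dir = np.linalg.solve(S_W, mu1 - mu0)
lda_dir /= np.linalg.norm(lda_dir)

print("PCA direction:", np.round(pca_dir, 2))  # close to (±1, 0)
print("LDA direction:", np.round(lda_dir, 2))  # close to (0, 1)
```

Projecting onto the PCA direction would mix the two classes together. Projecting onto the LDA direction keeps them apart, which is why LDA is preferred when the goal is classification.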

Steps to Apply Linear Discriminant Analysis in Practice

Applying LDA involves several steps that can be followed regardless of the dataset. Understanding this workflow helps beginners and practitioners use the method effectively.

- Prepare the dataset and clean the data

- Check assumptions such as normality and covariance similarity

- Compute means and scatter matrices for each class

- Determine the optimal projection direction

- Transform the data into the lower-dimensional space

- Classify new observations based on distance to class means
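The checklist above can be sketched end to end in NumPy. This is an illustrative implementation, not a library API, and it rests on several assumptions:

- The data are synthetic Gaussian classes with a shared covariance, so the assumption-checking step is skipped.
- S_W is invertible.
- Class priors are treated as equal.
- The helper names `fit_lda` and `predict` are hypothetical.

Each direction is scaled so the pooled within-class variance along it is 1. That scaling makes nearest-mean classification in the projected space match the equal-prior LDA rule. In everyday work, scikit-learn's `LinearDiscriminantAnalysis` class (in `sklearn.discriminant_analysis`) covers the same workflow.

```python
import numpy as np

rng = np.random.default_rng(7)
# Prepare the data: synthetic 3-class dataset (4 features) with a shared covariance
cov = np.eye(4)
centers = np.array([[0, 0, 0, 0], [4, 0, 0, 0], [0, 4, 0, 0]], dtype=float)
X = np.vstack([rng.multivariate_normal(m, cov, size=100) for m in centers])
y = np.repeat([0, 1, 2], 100)

# Hold out 30% of the observations for evaluation
idx = rng.permutation(len(y))
test_idx, train_idx = idx[:90], idx[90:]
X_tr, y_tr, X_te, y_te = X[train_idx], y[train_idx], X[test_idx], y[test_idx]


def fit_lda(X, y):
    """Compute means, scatter matrices, and the top C-1 projection directions."""
    classes = np.unique(y)
    mu = X.mean(axis=0)
    d = X.shape[1]
    S_W, S_B = np.zeros((d, d)), np.zeros((d, d))
    for c in classes:
        X_c = X[y == c]
        mu_c = X_c.mean(axis=0)
        S_W += (X_c - mu_c).T @ (X_c - mu_c)
        diff = (mu_c - mu).reshape(-1, 1)
        S_B += len(X_c) * (diff @ diff.T)
    # Leading eigenvectors of S_W^{-1} S_B (assumes S_W is invertible)
    eigvals, eigvecs = np.linalg.eig(np.linalg.solve(S_W, S_B))
    order = np.argsort(eigvals.real)[::-1][: len(classes) - 1]
    W = eigvecs[:, order].real
    # Scale each direction so the pooled within-class variance along it is 1
    pooled = S_W / (len(y) - len(classes))
    W /= np.sqrt(np.sum(W * (pooled @ W), axis=0))
    # Class means in the projected space, used for nearest-mean classification
    centroids = np.array([X[y == c].mean(axis=0) @ W for c in classes])
    return classes, W, centroids


def predict(model, X_new):
    """Project X_new and assign each row to the nearest projected class mean."""
    classes, W, centroids = model
    Z = X_new @ W
    dists = np.linalg.norm(Z[:, None, :] - centroids[None, :, :], axis=2)
    return classes[np.argmin(dists, axis=1)]


model = fit_lda(X_tr, y_tr)
accuracy = np.mean(predict(model, X_te) == y_te)
print(f"held-out accuracy: {accuracy:.2f}")
```

With three classes, the projection has C − 1 = 2 dimensions, so `model[1]` has shape (4, 2). The transformed data can also be plotted directly for visualization.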

Linear Discriminant Analysis is a powerful statistical technique that combines simplicity with effectiveness. By maximizing the separation between classes, it allows for accurate classification and meaningful dimensionality reduction. While it does require assumptions such as normality and equal covariance matrices, LDA remains highly valuable in fields ranging from medicine to computer vision. Understanding how it works, its assumptions, and its practical applications enables analysts and data scientists to use it confidently. Despite its limitations with nonlinear or highly imbalanced data, Linear Discriminant Analysis continues to be a foundational tool for supervised learning and classification tasks.
