Concurrency Vs Parallelism Vs Multithreading

When developers and computer science learners start exploring performance optimization, three terms often come up: concurrency, parallelism, and multithreading. Although these words are sometimes used interchangeably, they have distinct meanings in programming. Understanding the differences between them is crucial for writing efficient software, scaling applications, and making the most of modern processors. Without that clarity, it is easy to confuse the concepts and apply the wrong approach to a problem, which can waste resources and reduce system efficiency.

Defining concurrency

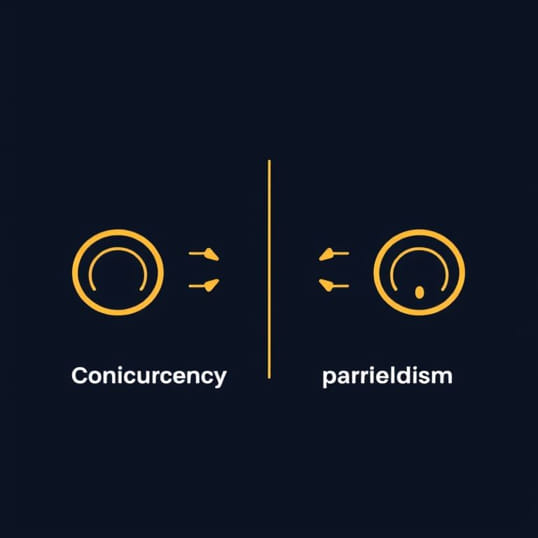

Concurrency refers to a system's ability to have multiple tasks in progress over the same period of time. It does not mean the tasks execute simultaneously; instead, their execution is interleaved so that each task can make progress independently. Concurrency is about dealing with multiple things at once by managing them effectively. For example, an operating system running many applications on a single CPU core is a form of concurrency: the CPU switches between them rapidly, creating the illusion that they are running at the same time.

Key characteristics of concurrency

- Focuses on managing multiple tasks efficiently.

- Does not guarantee simultaneous execution.

- Relies on task scheduling and switching.

- Improves responsiveness in systems like user interfaces or servers handling many requests.
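
To make the switching concrete, here is a minimal sketch in Python using `asyncio`, which runs coroutines on a single thread. The names `worker`, `run_interleaved`, and `interleaved_log` are hypothetical, chosen only for illustration. Each task records a step and then yields control, so both tasks make progress without ever running at the same instant.

```python
import asyncio


async def worker(name, steps, log):
    """Record each step, then hand control back to the event loop."""
    for i in range(steps):
        log.append(f"{name}:{i}")
        await asyncio.sleep(0)  # yield so another task can run


async def run_interleaved():
    log = []
    # Both tasks are in progress over the same period, on one thread.
    await asyncio.gather(worker("A", 3, log), worker("B", 3, log))
    return log


def interleaved_log():
    return asyncio.run(run_interleaved())


if __name__ == "__main__":
    print(interleaved_log())
```

The log contains steps from A and B mixed together rather than all of A followed by all of B, which is the interleaving that task scheduling and switching produce.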

Defining parallelism

Parallelism is the actual execution of multiple tasks at the exact same time. It requires hardware support, such as multiple CPU cores, to process tasks simultaneously. In parallelism, a workload is divided into smaller units that are executed together, reducing total execution time. For instance, processing large datasets or performing matrix operations can benefit greatly from parallelism because the workload can be split across multiple processors.

Key characteristics of parallelism

- Focuses on doing multiple tasks literally at the same time.

- Depends on hardware with multiple cores or processors.

- Improves performance for computation-heavy problems.

- Often used in scientific computing, data processing, and rendering tasks.
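
The idea of splitting a workload across processors can be sketched in Python as follows. This is an illustration under stated assumptions, not a tuned implementation: the function names and the `executor_cls` parameter are hypothetical, and the "dataset" is just a list of integers. In standard CPython builds, the global interpreter lock keeps threads from executing Python bytecode in parallel, so the sketch defaults to worker processes.

```python
from concurrent.futures import ProcessPoolExecutor


def sum_of_squares(chunk):
    """CPU-bound work on one independent chunk."""
    return sum(x * x for x in chunk)


def split(data, parts):
    """Split data into `parts` nearly equal contiguous chunks."""
    size, extra = divmod(len(data), parts)
    chunks, start = [], 0
    for i in range(parts):
        end = start + size + (1 if i < extra else 0)
        chunks.append(data[start:end])
        start = end
    return chunks


def parallel_sum_of_squares(data, workers=4, executor_cls=ProcessPoolExecutor):
    """Sum the squares of `data`, one chunk per worker.

    executor_cls is an illustrative hook so the same code can be tried
    with a different executor (for example ThreadPoolExecutor).
    """
    with executor_cls(max_workers=workers) as pool:
        # Each chunk is processed independently; partial sums are combined.
        return sum(pool.map(sum_of_squares, split(data, workers)))
```

On platforms that start worker processes by launching a fresh interpreter (Windows and macOS by default), call `parallel_sum_of_squares` from under an `if __name__ == "__main__":` guard. Whether the chunks really run at the same time still depends on the machine having more than one core available.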

Defining multithreading

Multithreading is a programming technique where a process creates multiple threads to perform tasks. Each thread represents a lightweight unit of execution that shares the same memory space as the main process. Multithreading can enable both concurrency and parallelism, depending on how threads are scheduled and executed. For example, a multithreaded web server can handle multiple client requests concurrently, and on a multi-core machine, those threads may also run in parallel.

Key characteristics of multithreading

- Uses multiple threads within a single process.

- Threads share the same memory space, making communication efficient.

- Can provide concurrency on single-core systems and parallelism on multi-core systems.

- Widely used in applications requiring responsiveness, such as GUIs and network servers.
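
The shared memory space is easy to see in a small Python sketch. The names `count_words` and `count_in_threads` are hypothetical. Every thread writes into the same dictionary, with no copying or message passing between them; each thread writes to its own key, so this particular example needs no lock.

```python
import threading


def count_words(lines, results, key):
    """Store a word count in a dict that all threads share."""
    results[key] = sum(len(line.split()) for line in lines)


def count_in_threads(documents):
    results = {}  # lives in the process's memory, visible to every thread
    threads = [
        threading.Thread(target=count_words, args=(lines, results, name))
        for name, lines in documents.items()
    ]
    for t in threads:
        t.start()
    for t in threads:
        t.join()  # wait until every thread has finished
    return results


if __name__ == "__main__":
    docs = {"a": ["hello world", "foo"], "b": ["one two three"]}
    print(count_in_threads(docs))
```

If several threads updated the same key instead, the updates would need to be synchronized.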

Concurrency vs parallelism vs multithreading explained

Although these terms are related, they focus on different aspects of execution. Concurrency is about structuring a program so that many tasks can be in progress at once. Parallelism is about physically running tasks at the same time. Multithreading is one way to implement concurrency, parallelism, or both, depending on the environment. For a clearer picture, consider a real-life analogy: cooking in a kitchen.

- Concurrency: You prepare different dishes by switching between tasks: chopping vegetables for one dish, then stirring soup, then frying meat. You are not doing them simultaneously, but you are making progress on all of them.

- Parallelism: You and another cook prepare dishes at the same time on different stoves. The work is truly happening simultaneously.

- Multithreading: You have multiple hands (threads) working on different tasks but sharing the same kitchen space and ingredients.

Practical examples in programming

The differences between concurrency, parallelism, and multithreading become easier to see when we look at real applications.

Concurrency example

A chat application handling many user messages concurrently does not process them all at once. Instead, it quickly switches between conversations, giving the appearance that everything is happening simultaneously.

Parallelism example

A video encoding program can split a video into chunks and process them on multiple cores at once. The result is faster completion since tasks are executed simultaneously.

Multithreading example

A modern web browser uses multiple threads: one for rendering the webpage, another for handling user input, and another for background tasks. This makes the browser more responsive.
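
A heavily simplified sketch of that division of labor, in Python, might look like the following. It is not how any real browser is implemented; `background_worker`, `run_session`, and the job and event names are hypothetical. One thread drains a queue of slow jobs while the main thread keeps handling input.

```python
import queue
import threading


def background_worker(tasks, done):
    """Pull jobs off the queue until a None sentinel arrives."""
    while True:
        job = tasks.get()
        if job is None:
            break
        done.append(f"finished {job}")


def run_session(jobs, inputs):
    tasks, done, handled = queue.Queue(), [], []
    worker = threading.Thread(target=background_worker, args=(tasks, done))
    worker.start()
    for job in jobs:
        tasks.put(job)         # hand slow work to the background thread
    for event in inputs:
        handled.append(event)  # the main thread keeps handling input
    tasks.put(None)            # sentinel: no more work
    worker.join()
    return handled, done


if __name__ == "__main__":
    print(run_session(["download", "decode"], ["click", "scroll"]))
```

Because the main thread only enqueues work and never waits on it until the end, input handling is not blocked by the background jobs.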

Benefits of concurrency

Concurrency is useful when you want responsiveness and efficiency rather than raw speed. It allows applications to remain usable while performing long operations in the background. For example, in graphical user interfaces, concurrency prevents the program from freezing while loading data.

- Improves system responsiveness.

- Allows better resource utilization.

- Scales well for I/O-bound tasks like network communication.
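
The benefit for I/O-bound work can be sketched in Python with simulated network calls. Here `fake_request` is a hypothetical stand-in that only waits, and `asyncio.sleep` plays the role of network latency. Because the waits overlap on a single thread, the total time is close to the longest single wait rather than the sum of all of them.

```python
import asyncio
import time


async def fake_request(delay):
    """Stand-in for a network call: waiting, not computing."""
    await asyncio.sleep(delay)
    return delay


async def fetch_all(delays):
    # Start every request, then wait for all of them together.
    return await asyncio.gather(*(fake_request(d) for d in delays))


def timed_fetch(delays):
    """Return the results and the wall-clock seconds the batch took."""
    start = time.perf_counter()
    results = asyncio.run(fetch_all(delays))
    return results, time.perf_counter() - start


if __name__ == "__main__":
    results, elapsed = timed_fetch([0.2] * 5)
    print(results, f"{elapsed:.2f}s")
```

Five 0.2-second waits handled one after another would take about a second; handled concurrently they finish in roughly the time of one. No extra cores are involved, which is why this is concurrency rather than parallelism.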

Benefits of parallelism

Parallelism shines when the workload is computation-heavy and can be divided into independent tasks. It reduces the execution time by distributing work across processors.

- Speeds up computation-intensive applications.

- Makes use of modern multi-core processors.

- Ideal for data analysis, simulations, and rendering tasks.

Benefits of multithreading

Multithreading offers the best of both worlds by supporting concurrency and parallelism. It helps developers write programs that respond quickly while also utilizing hardware effectively.

- Supports both concurrent and parallel execution.

- Reduces overhead compared to creating multiple processes.

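- Widely supported in many programming languages, such as Java, C++, and Python (although in standard CPython builds the global interpreter lock keeps threads from running Python bytecode in parallel, so threads there mainly help with I/O-bound work).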

Challenges and drawbacks

While concurrency, parallelism, and multithreading each bring advantages, they also introduce complexity and potential problems.

- Concurrency issues: Requires careful task management to avoid inefficiency.

- Parallelism issues: Some tasks cannot be divided, leading to diminishing returns.

- Multithreading issues: Shared memory introduces risks like race conditions and deadlocks.
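
The diminishing returns of parallelism are commonly quantified with Amdahl's law: if a fraction p of a program's runtime can be parallelized across n processors, the best possible speedup is 1 / ((1 - p) + p / n). The Python sketch below computes that bound; the function name `amdahl_speedup` and the 90% figure are illustrative assumptions, not measurements.

```python
def amdahl_speedup(parallel_fraction, processors):
    """Upper bound on speedup when only part of the work is parallelizable."""
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if processors < 1:
        raise ValueError("processors must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / processors)


if __name__ == "__main__":
    # Assume 90% of the work can be parallelized.
    for n in (1, 2, 8, 64, 1024):
        print(n, round(amdahl_speedup(0.9, n), 2))
```

With 90% of the work parallelizable, 8 processors give a speedup of about 4.7, and no number of processors can push it past 10, because the serial 10% always remains.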

Best practices for developers

When deciding between concurrency, parallelism, and multithreading, developers should analyze the type of workload and the goals of the program.

- Use concurrency when you need responsiveness and the tasks are I/O-bound.

- Use parallelism when tasks are CPU-intensive and independent of each other.

- Use multithreading to combine concurrency and parallelism when appropriate.

- Always consider synchronization mechanisms to avoid errors when using threads.
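
As one example of a synchronization mechanism, the Python sketch below protects a shared counter with a lock. The class `SafeCounter` and the function `count_with_threads` are hypothetical names. Incrementing a shared value is a read-modify-write sequence, and without the lock two threads could read the same old value and lose an update.

```python
import threading


class SafeCounter:
    """A counter whose increments are protected by a lock."""

    def __init__(self):
        self.value = 0
        self._lock = threading.Lock()

    def increment(self):
        # Only one thread at a time may perform the read-modify-write.
        with self._lock:
            self.value += 1


def count_with_threads(num_threads, increments_per_thread):
    counter = SafeCounter()

    def work():
        for _ in range(increments_per_thread):
            counter.increment()

    threads = [threading.Thread(target=work) for _ in range(num_threads)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return counter.value


if __name__ == "__main__":
    print(count_with_threads(4, 10_000))
```

Locks come with their own cost: holding more than one at a time in inconsistent order is a common source of deadlocks, so it helps to keep critical sections short and lock usage simple.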

Concurrency, parallelism, and multithreading are core concepts in computer science that every developer should understand. Concurrency helps manage multiple tasks efficiently, parallelism executes tasks simultaneously for speed, and multithreading is a practical mechanism that can provide both. While they are related, each concept serves a different purpose and comes with unique advantages and challenges. By mastering when and how to use them, developers can build applications that are faster, more responsive, and better optimized for today’s hardware.